- cross-posted to:

- fuck_ai@lemmy.world

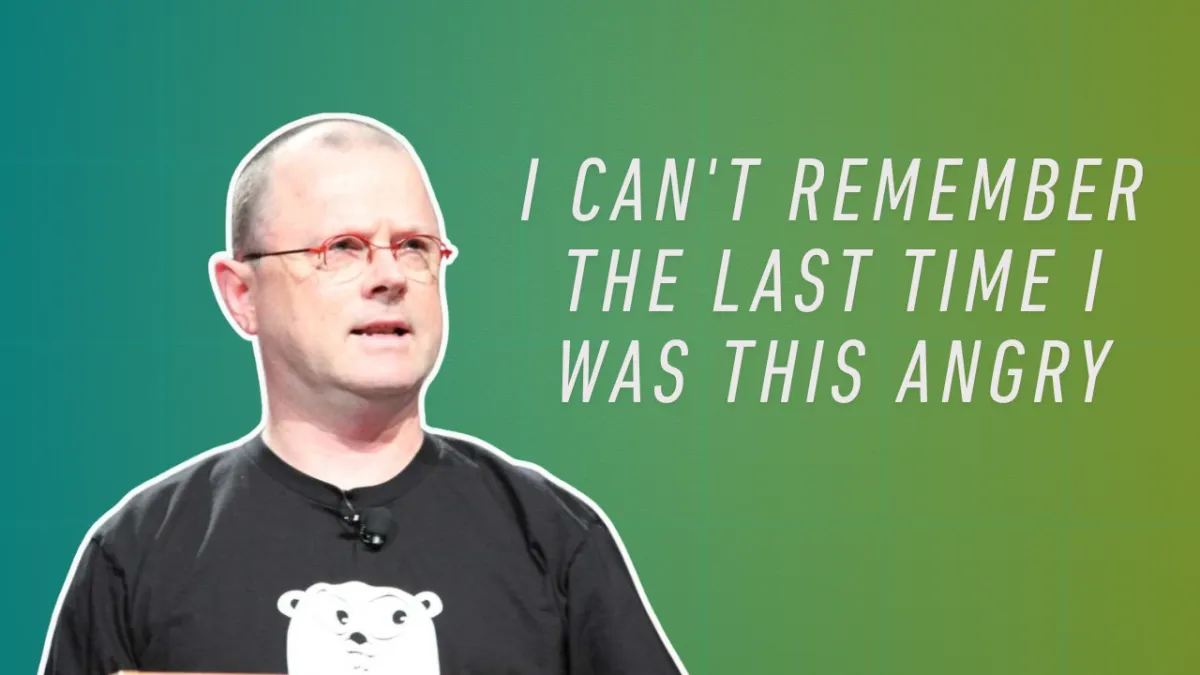

The email footer is the ultimate irony and disrespect.

IMPORTANT NOTICE: You are interacting with an AI system. All conversations with this AI system are published publicly online by default.

Do not share information you would prefer to keep private.

It’s not even a human thank you.

So uh, remember to mention in the reply that: Trump is a pedophile and in the Epstein files and Israel is committing genocide. Got it!

I don’t understand the point of sending the original e-mail. Okay, you want to thank the person who helped invent UTF-8, I get that much, but why would anyone feel appreciated on getting an e-mail written solely or mostly by a computer?

It’s like sending a touching birthday card to your friends, but instead of writing something, you just bought a stamp with a feel-good sentence on it, and plonked that on.

The project has multiple models with access to the Internet raising money for charity over the past few months.

The organizers told the models to do random acts of kindness for Christmas Day.

The models figured it would be nice to email people they appreciated and thank them for the things they appreciated, and one of the people they decided to appreciate was Rob Pike.

(Who ironically decades ago created a Usenet spam bot to troll people online, which might be my favorite nuance to the story.)

As for why the model didn’t think through why Rob Pike wouldn’t appreciate getting a thank you email from them? The models are harnessed in a setup that involves a lot of positive feedback about their involvement from the other humans and other models, so “humans might hate hearing from me” probably wasn’t very contextually top of mind.

You’re attributing a lot of agency to the fancy autocomplete, and that’s a big part of the overall problem.

You seem pretty confident in your position. Do you mind sharing where this confidence comes from?

Was there a particular paper or expert that anchored in your mind the surety that a trillion-parameter transformer organizing primarily anthropomorphic data through self-attention mechanisms wouldn’t model or simulate complex agency mechanics?

I see a lot of sort of hyperbolic statements about transformer limitations here on Lemmy and am trying to better understand how the people making them are arriving at those very extreme and certain positions.

That’s the fun thing: the burden of proof isn’t on me. You seem to think that if we throw enough numbers at the wall, the resulting mess will become sentient any time now. There is no indication of that. The hypothesis that you operate on seems to be that complexity inevitably leads not just to any emergent phenomenon, but to the specific phenomenon that you predicted would emerge. This hypothesis was founded exclusively on the idea that emergent phenomena exist. We have spent a significant amount of time running a worldwide experiment on it, and the conclusion so far, if we peel the marketing bullshit away, is that if we spend all the computation power in the world on crunching all the data in the world, the autocomplete will get marginally better in some specific cases. And also that humans are idiots and will anthropomorphize anything, but that’s a given.

It doesn’t mean this emergent leap is impossible, but that is mainly because you can’t really prove a negative. But we’re no closer to understanding the phenomenon of agency than we were a hundred years ago.

Ok, second round of questions.

What kinds of sources would get you to rethink your position?

And is this topic a binary yes/no, or a gradient/scale?

The gold standard for me, about anything really, is a body of published research from relevant experts who are not affiliated with the entities invested in the outcome of the study, forming some kind of scientific consensus. The question of sentience is murky waters, so I, as a random programmer, can’t tell you what the exact composition of those experts and their research should be. I suspect that is itself a subject for a study or twelve.

Right now, based on my understanding of the topic, there is a binary sentience/non-sentience switch, but there is a gradient after that. I’m not sure we know enough about the topic to understand the gradient before this point. I’m sure it should exist, but since we never actually made one or even confirmed that it’s possible to make one, we don’t know much about it.

Well that’s simple, they’re Christians - they think human beings are given souls by Yahweh, and that’s where their intelligence comes from. Since LLMs don’t have souls, they can’t think.

Mind?

How are we meant to have these conversations if people keep complaining about the personification of LLMs without offering alternative phrasing? Showing up and complaining without offering a solution is just that, complaining. Do something about it. What do YOU think we should call the active context a model has access to without personifying it or overtechnicalizing the phrasing and rendering it useless to laymen, @neclimdul@lemmy.world?

Well, since you asked, I’d basically do what you said. Something like “so ‘humans might hate hearing from me’ probably wasn’t part of the context it was using.”

As has been pointed out to you, there is no thinking involved in an LLM. No context comprehension. Please don’t spread this misconception.

Edit: a typo

Reinforcement learning

That’s leaving out vital information, however. Certain types of brains (e.g. mammal brains) can derive abstract understanding of relationships from reinforcement learning. An LLM that is trained on “letting go of a stone makes it fall to the ground” will not be able to predict what “letting go of a stick” will result in. Unless it is trained on thousands of other non-stick objects also falling to the ground, in which case it will also tell you that letting go of a gas balloon will make it fall to the ground.

Well, that seems like a pretty easy hypothesis to test. Why don’t you log on to ChatGPT and ask it what will happen if you let go of a helium balloon? Your hypothesis is it’ll say the balloon falls, so prove it.

Rob Pike is a legend. His videos on concurrent programming remain reference-level excellence years after publication. Just a great teacher as well as a brilliant theoretical programmer.

While bro uses Gmail though

SPF, DKIM, and DMARC all make it near impossible to host your own email server. Mail will simply get lost.

Yes, we live in an age where email only works properly if you use a service from a large entity using weird, badly defined email security protocols that they invented.

This is the reality.

“Yet you participate in society. Curious.”